Introduction: Cooling Becomes a Strategic Constraint

AI workloads are rewriting the rules for datacenter design. As generative AI, hyperscale cloud services, and real-time inferencing proliferate, the thermal loads inside data halls are reaching levels that traditional cooling systems weren’t designed to manage. In this AI era, cooling is no longer an afterthought. It is a core strategic constraint that directly affects performance, uptime, and long-term scalability.

Legacy cooling approaches struggle under the concentrated heat from GPU-heavy racks. Datacenters built for AI must therefore treat thermal design as fundamental infrastructure, not an optimization task at the end of the project.

Why AI Workloads Redefine Thermal Design

AI workloads generate heat patterns very different from traditional enterprise or batch computing. Modern GPU clusters run at high utilization for extended periods, producing sustained, high-intensity heat rather than periodic or cyclical spikes.

This is compounded by a broader trend in datacenter power demand. According to Gartner, electricity demand for datacenters worldwide is projected to grow 16% in 2025 and double by 2030. This growth is significantly driven by AI-optimized servers, which are expected to account for an increasing share of total power usage over the decade.

As power densities rise, so do thermal challenges, outpacing the capacity of airflow-centric designs to maintain stability.

AI thermal challenges are further amplified by workload variability. Training, fine-tuning, and inference place very different thermal demands on the same infrastructure. Training workloads often drive sustained peak power draw, while inference introduces spiky, latency-sensitive demand. Cooling systems designed around static or average loads struggle to maintain thermal stability across these shifts.

AI-ready infrastructure must support both steady-state and peak demand dynamically, rather than relying on predictable enterprise workload assumptions.
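
To make the gap between average and peak sizing concrete, the sketch below compares one rack power trace against cooling capacity sized to the mean and to the peak. The trace values, the 10% margin, and the function name `shortfall_fraction` are illustrative assumptions, not measurements from any facility.

```python
from statistics import mean

def shortfall_fraction(power_kw, capacity_kw):
    """Fraction of samples where rack heat load exceeds cooling capacity."""
    over = sum(1 for p in power_kw if p > capacity_kw)
    return over / len(power_kw)

# Hypothetical rack power trace in kW: sustained training, then spiky inference.
trace_kw = [40, 40, 40, 40, 12, 30, 10, 35, 12, 28]

avg_sized = mean(trace_kw) * 1.10   # capacity sized to the average plus a 10% margin
peak_sized = max(trace_kw) * 1.10   # capacity sized to the peak plus a 10% margin

print(f"average-sized: {shortfall_fraction(trace_kw, avg_sized):.0%} of samples over capacity")
print(f"peak-sized:    {shortfall_fraction(trace_kw, peak_sized):.0%} of samples over capacity")
```

In this made-up trace, capacity sized to the average is exceeded during every training sample and the largest inference spike, while capacity sized to the peak is never exceeded.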

High-Density Racks: Where Cooling Fails First

Thermal stress doesn’t distribute evenly across a data hall. The hottest spots are concentrated at the rack level where GPUs and accelerators are stacked densely. Traditional aisle-based airflow cooling can reduce ambient temperature, but often fails to remove heat effectively from within tightly packed equipment.

Cooling accounts for a meaningful share of a datacenter's total energy consumption, so inefficient thermal design not only threatens reliability but also drives up operating costs and makes sustainability targets harder to meet.

The problem is systemic: airflow cooling capacity does not scale linearly with server power, because air only carries heat away from components rather than removing it efficiently at the source.
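
A rough energy balance shows the scale of the problem. The sketch below uses the steady-state relation heat = density × flow × specific heat × temperature rise to estimate how much air or water must pass through a rack to carry away a given heat load. The rack powers, the 12 K temperature rise, and the approximate fluid properties are assumptions chosen for illustration only.

```python
def volumetric_flow_m3s(heat_w, density_kg_m3, cp_j_kg_k, delta_t_k):
    """Coolant flow needed to carry heat_w watts at a given temperature rise."""
    return heat_w / (density_kg_m3 * cp_j_kg_k * delta_t_k)

# Approximate density (kg/m^3) and specific heat (J/(kg*K)) near room temperature.
AIR = (1.2, 1005.0)
WATER = (997.0, 4186.0)

# Hypothetical rack heat loads in kW, all with an assumed 12 K coolant temperature rise.
for rack_kw in (10, 40, 100):
    air = volumetric_flow_m3s(rack_kw * 1000, *AIR, delta_t_k=12.0)
    water = volumetric_flow_m3s(rack_kw * 1000, *WATER, delta_t_k=12.0)
    print(f"{rack_kw:>3} kW rack: air {air:.2f} m^3/s, water {water * 1000:.2f} L/s")
```

Under these assumptions, water carries the same heat with a few thousand times less volumetric flow than air, which is why liquid approaches become attractive as rack power climbs.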

A critical challenge in high-density AI environments is that thermal risk is often masked by room-level metrics. Even when ambient temperatures appear within acceptable ranges, localized hotspots can form deep within racks where accelerators are densely packed. This creates a false sense of safety and delays intervention.

This is why rack-level and component-adjacent monitoring becomes essential as density increases, since room-based sensing alone is insufficient.
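
The masking effect is easy to reproduce with a few numbers. In the minimal sketch below, the room average sits comfortably under an alert threshold while one rack is well over it. The rack IDs, readings, threshold, and the helper `find_hotspots` are all hypothetical.

```python
from statistics import mean

def find_hotspots(rack_inlet_c, limit_c):
    """Return rack IDs whose reading exceeds limit_c, hottest first."""
    hot = {rack: t for rack, t in rack_inlet_c.items() if t > limit_c}
    return sorted(hot, key=hot.get, reverse=True)

# Hypothetical per-rack inlet temperatures in degrees Celsius.
readings = {"A01": 24.0, "A02": 25.5, "A03": 38.0, "A04": 23.5, "A05": 24.0}
LIMIT_C = 32.0  # assumed alert threshold, not a vendor or standards value

room_avg = mean(readings.values())
print(f"room average: {room_avg:.1f} C (within limit: {room_avg <= LIMIT_C})")
print(f"hotspots: {find_hotspots(readings, LIMIT_C)}")
```

A room-level check passes here, yet the per-rack check flags A03. The same logic applies one level down, with component-adjacent sensors inside the rack.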

Cooling Models for AI Datacenters: Choosing the Right Fit

No single cooling strategy is perfect for every datacenter profile, especially with AI workloads that vary widely in density and duty cycle. Instead, operators are adopting layered approaches that combine multiple technologies depending on rack density, retrofit constraints, and sustainability goals.

Below are the primary cooling technologies shaping AI-ready facilities:

Direct-to-Chip (Cold Plate) Cooling

Direct-to-Chip (D2C), also known as cold plate cooling, circulates liquid coolant directly to the hottest components – typically GPUs and CPUs – removing heat at the source. By transferring heat more efficiently than air, D2C supports significantly higher rack densities while reducing airflow dependency.

It is becoming the de facto choice for racks whose power density exceeds what air cooling alone can handle.

Immersion Cooling (Single-Phase & Two-Phase)

Immersion cooling submerges servers in dielectric fluid that absorbs heat directly from components.

- Single-phase immersion relies on fluid circulation without phase change.

- Two-phase immersion leverages boiling and condensation cycles for even higher thermal efficiency.

Immersion cooling supports extreme density designs and enables very high heat removal performance. However, it requires significant shifts in operational practices, maintenance models, and facility layout.

Rear Door Heat Exchangers (RDHx)

Rear Door Heat Exchangers (RDHx) mount liquid-cooled heat exchangers at the back of racks, capturing heat as hot air exits servers. This approach enhances traditional air-cooled environments by removing heat before it re-enters the room.

RDHx is often considered a strong transitional or retrofit-friendly technology for facilities moving toward higher densities without full liquid redesign.

Hybrid & Modular Liquid Cooling Architectures

Many AI datacenters are now deploying hybrid models that combine air cooling, RDHx, and direct-to-chip liquid systems within the same facility. Modular liquid distribution units (LDUs), commonly called coolant distribution units (CDUs), allow phased adoption, enabling operators to scale cooling infrastructure as AI density increases.

Hybrid approaches offer practical scalability, balancing upfront investment with long-term flexibility while reducing the risk of stranded infrastructure.

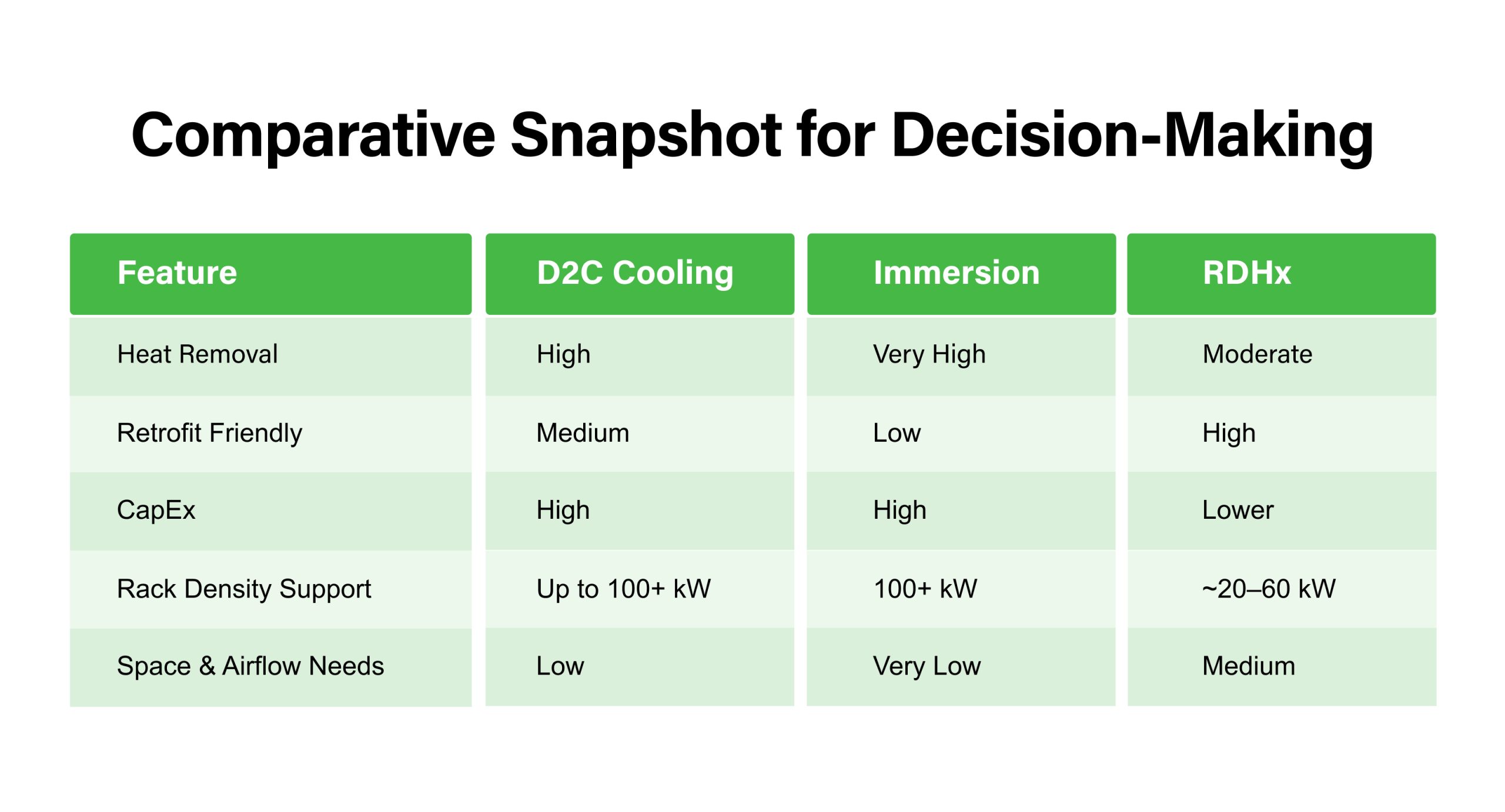

This comparison highlights that cooling decisions must align with density targets, retrofit constraints, capital budgets, and operational readiness.
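
One way to picture this alignment is as a simple decision rule keyed on rack density and retrofit status. The sketch below is only an illustration: the function `suggest_cooling` and every kW threshold in it are placeholder assumptions, not figures from this article or from any vendor, and a real selection would also weigh capital budget and operational readiness.

```python
def suggest_cooling(rack_kw, retrofit,
                    air_max_kw=20.0, rdhx_max_kw=50.0, d2c_max_kw=120.0):
    """Map rack density and retrofit status to a cooling approach.

    All thresholds are placeholder assumptions; replace them with values
    from your own facility and vendor guidance.
    """
    if rack_kw <= air_max_kw:
        return "air"
    if retrofit and rack_kw <= rdhx_max_kw:
        return "RDHx"            # retrofit-friendly step up from air
    if rack_kw <= d2c_max_kw:
        return "direct-to-chip"
    return "immersion"           # extreme density

# Hypothetical (rack kW, is retrofit) cases.
for kw, retrofit in [(12, True), (35, True), (35, False), (80, True), (150, False)]:
    print(f"{kw:>3} kW, retrofit={retrofit}: {suggest_cooling(kw, retrofit)}")
```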

Cooling Cannot Be Designed in Isolation

Modern datacenter design requires that cooling, power, and rack layout be planned together. Disparate planning, where cooling capacity is sized after racks and power distribution, leads to inefficiencies, local hotspots, increased risk of failure, and wasted capital expenditure.

Datacenters must rethink facilities as integrated systems, where cooling is dimensioned in lockstep with power delivery and compute density.

When electrical, mechanical, and thermal layers are not coordinated, inefficiencies compound across energy use, operational risk, and sustainability outcomes.

Efficiency, Sustainability, and Intelligent Thermal Control

AI workloads elevate the importance of intelligent thermal control not just for uptime, but for efficiency and environmental performance. Efficient cooling systems directly reduce energy consumption and improve metrics like Power Usage Effectiveness (PUE).
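
PUE is the ratio of total facility energy to IT equipment energy, so a value closer to 1.0 means less overhead. The short sketch below shows how a reduction in cooling energy moves the metric. The annual energy figures are hypothetical.

```python
def pue(it_kwh, cooling_kwh, other_overhead_kwh):
    """Power Usage Effectiveness: total facility energy / IT equipment energy."""
    if it_kwh <= 0:
        raise ValueError("IT energy must be positive")
    return (it_kwh + cooling_kwh + other_overhead_kwh) / it_kwh

# Hypothetical annual energy figures in kWh.
baseline = pue(it_kwh=10_000_000, cooling_kwh=4_000_000, other_overhead_kwh=1_000_000)
improved = pue(it_kwh=10_000_000, cooling_kwh=1_500_000, other_overhead_kwh=1_000_000)
print(f"baseline PUE: {baseline:.2f}, improved PUE: {improved:.2f}")
```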

With AI driving expansion, Gartner projects that datacenter electricity demand will double by 2030, which means today's inefficiencies will be magnified tomorrow. If cooling systems lag behind compute growth, energy and emissions targets will become unattainable.

Intelligent, real-time monitoring enables dynamic thermal optimization, shifting cooling resources to where they are needed most and coordinating with workload placement and power availability. These systems use AI and analytics to reduce overcooling and eliminate hot spots, driving down both costs and carbon intensity.

In advanced AI facilities, intelligent thermal control increasingly intersects with workload orchestration. Real-time telemetry lets cooling systems coordinate with compute scheduling and power availability, steering workloads away from thermally constrained racks and reducing localized stress.
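
A minimal sketch of this coordination is a placement rule that considers thermal headroom alongside power. The `Rack` fields, the 3 °C minimum headroom, and the function `place_job` below are assumptions made for illustration. Production schedulers would draw on far richer telemetry.

```python
from dataclasses import dataclass

@dataclass
class Rack:
    name: str
    inlet_c: float        # current inlet temperature from telemetry
    limit_c: float        # maximum allowed inlet temperature
    free_power_kw: float  # remaining power budget

def place_job(racks, job_kw, min_headroom_c=3.0):
    """Pick the rack with the most thermal headroom that can also power the job."""
    candidates = [
        r for r in racks
        if r.free_power_kw >= job_kw and (r.limit_c - r.inlet_c) >= min_headroom_c
    ]
    if not candidates:
        return None  # no rack is safe; the caller may queue or defer the job
    best = max(candidates, key=lambda r: r.limit_c - r.inlet_c)
    best.free_power_kw -= job_kw  # reserve the power budget
    return best.name

# Hypothetical telemetry snapshot.
racks = [
    Rack("A01", inlet_c=30.5, limit_c=32.0, free_power_kw=20.0),  # too hot
    Rack("A02", inlet_c=24.0, limit_c=32.0, free_power_kw=5.0),   # too little power
    Rack("A03", inlet_c=26.0, limit_c=32.0, free_power_kw=15.0),
]
print(place_job(racks, job_kw=10.0))
```

Here the coolest rack is skipped because it lacks power, and the rack with the most spare power is skipped because it is near its thermal limit, so the job lands on the one rack that satisfies both constraints.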

Designing Cooling for What Comes Next

AI hardware is evolving faster than fixed, monolithic cooling designs can accommodate. Datacenters focused solely on today’s density targets risk expensive, disruptive retrofits as racks densify, AI models get larger, and power budgets expand.

Future-ready cooling emphasizes:

- Flexibility: Designs that support a range of density profiles without redesign.

- Operational Skills: Training and tooling for liquid and immersion cooling management.

- Sustainable Resource Use: Water and energy constraints will shape what cooling models are viable in different regions.

This approach reduces the need for reactive upgrades and enables facilities to adapt as compute and thermal demands evolve.

Conclusion: Thermal Readiness Defines AI Readiness

Cooling strategy is no longer a back-of-house engineering detail. In the era of high-density AI compute, thermal readiness equals AI readiness. Facilities that prioritize integrated cooling, alongside power and compute planning, will see better performance, lower operating costs, greater sustainability, and reduced risk.

Effective cooling infrastructure is now a long-term strategic investment that defines whether datacenters can support the next generation of digital innovation at scale.

Priya Patil, VP & National Head - Strategic Accounts, CtrlS Datacenters

Priya is the National Head of Strategic Colocation at CtrlS, with deep expertise in the IT & ITeS industry. She drives scalable growth through strong sales strategy, customer relationships, and IT service management. Known for her consultative approach, Priya builds high-value partnerships while blending strategic vision with operational excellence to deliver sustainable impact across regions.