Why Multi-Cloud Predictability Is Now a Strategic Imperative

Cloud cost conversations often start with “cut spend” and end with teams feeling blocked. The better goal is predictability: the ability for finance to forecast cloud costs with confidence and for engineering to keep shipping.

For many boards, the question is no longer “Are we optimizing cloud?”

It is:

“Can we forecast it with confidence?”

The real CFO–CIO pact is turning cloud from a surprise invoice into a managed, measurable operating model.

Why now? Because the cloud is no longer a side line item. As overall IT spending rises, cloud and AI infrastructure are pulling more budget share and scrutiny.

Below is a practical path to predictable multi-cloud spend, grounded in FinOps principles and designed for both business and technology leaders, and how a managed service like CtrlS Cloud Optimize can accelerate it.

Why Predictability, Not Cost Cutting – Is the Real Objective

Cost cutting is episodic. Predictability is operational.

Cost cutting happens after a spike. Predictability prevents the spike from becoming a surprise.

In AI-era enterprises, where infrastructure underpins digital revenue, what finance needs is not a lower number. It needs a credible number.

When cloud becomes predictable:

- Budgets are built on demand drivers, not padding.

- Trade-offs between performance, resilience, and cost become explicit.

- Product P&Ls reflect infrastructure realities.

- Engineering teams move faster because guardrails replace approvals.

Most organizations still treat cloud optimization as a reactive exercise. The more mature ones treat it as an operating discipline, often structured through FinOps.

But maturity requires more than dashboards.

Why Multi-Cloud Has Become Harder to Forecast – Especially in 2026

Multi-cloud was originally adopted for flexibility, resilience, and innovation. But flexibility without economic discipline introduces variability.

Now layer AI on top.

GPU training clusters scale aggressively during experimentation. Inference workloads create steady-state cost baselines. Data movement across clouds generates unpredictable egress charges. Storage expands as model iterations accumulate.

The result is not just higher spend, it is nonlinear spend.

This is where many organizations experience tension between finance and technology.

The CFO sees volatility.

The CIO sees velocity.

Bridging that gap requires structural clarity.

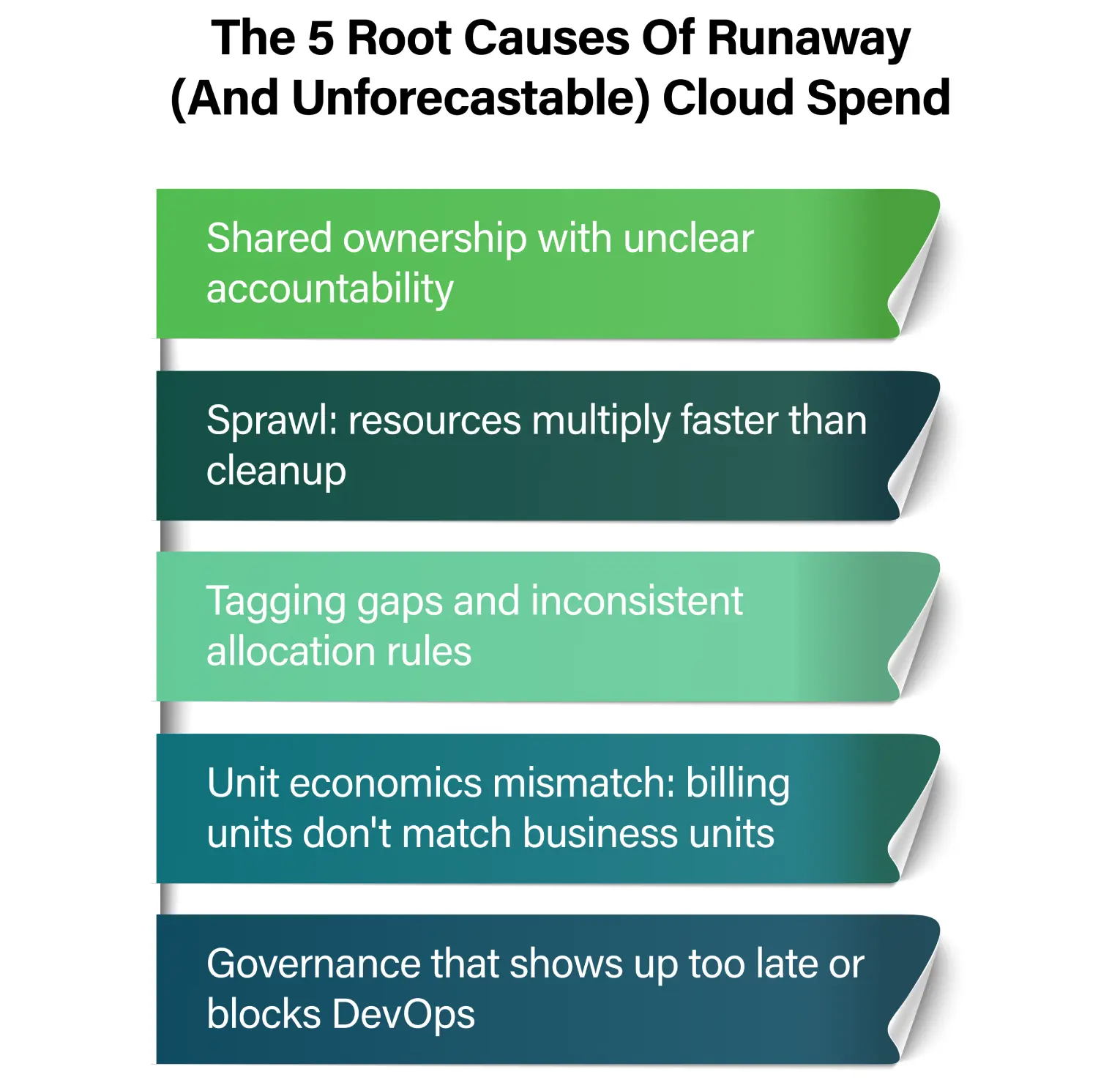

The Five Structural Causes of Unpredictable Cloud Spend

These are not tactical problems. They are systemic.

1. Shared Ownership Without Unit Accountability

In multi-cloud environments, the platform team may “own” the bill, application teams drive consumption, and finance owns the forecast. But no one owns the economics.

When cost per transaction or per active user is unclear, the bill appears random.

Consider an AI-powered product. If the cost per inference request rises quietly due to architectural drift, finance sees margin compression before engineering sees cost inflation.

Predictability begins when cloud is expressed in business terms.

2. Sprawl That Outpaces Hygiene

Dev/test environments linger. Storage expands silently. Temporary GPU clusters become permanent capacity.

Sprawl is rarely reckless, it is usually ungoverned.

In one enterprise environment, non-production workloads accounted for nearly 35% of total compute consumption, yet were not tied to any business metric.

Without structural cleanup mechanisms, forecasting becomes backward-looking instead of forward-looking.

3. Tagging Gaps That Break Allocation Integrity

If you cannot answer “who consumed this and why,” you cannot forecast it.

Missing tags, inconsistent naming conventions, and shared resource ambiguity create “unallocated” cost buckets. Finance then estimates. Engineering disputes. Trust erodes.

Predictability is built on allocation accuracy.

4. Unit Economics That Don’t Map to Business Drivers

Cloud bills in vCPU-hours, IOPS, and GB egress.

The business measures revenue per customer, cost per API call, or time-to-market.

When those metrics do not connect, forecasting becomes speculative.

Mature organizations translate infrastructure consumption into unit-cost KPIs: cost per transaction, cost per model training run, cost per customer served.

When demand scales, cost projections follow logically.

5. Governance That Arrives Too Late, or Blocks Delivery

Cost reviews after deployment create reactive cleanup cycles.

Overly strict approval gates push teams toward shadow environments.

The goal is neither heavy control nor loose autonomy. It is embedded discipline.

Predictability emerges when governance is part of the engineering workflow, not layered on top of it.

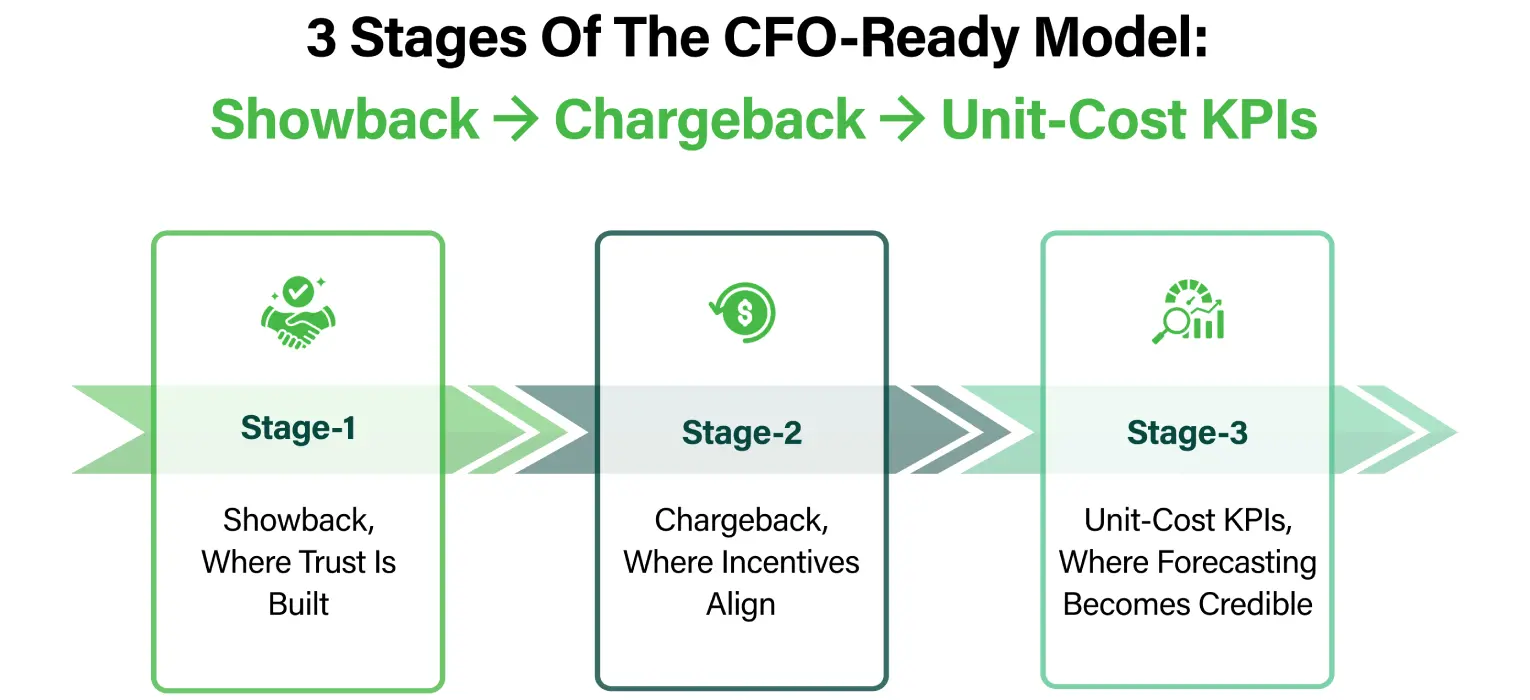

The Maturity Path: From Visibility to Financial Engineering

Most organizations attempt to jump directly to chargeback models.

That often fails.

Without visibility maturity, internal billing creates friction instead of accountability.

Trust must precede enforcement.

Stage 1: Showback, Where Trust Is Built

The goal here is credibility, not penalties.

Spend becomes visible by application, product, or business unit. Unallocated costs are exposed transparently. Variance drivers are explained.

In one organization, simply introducing structured showback reduced “mystery spend” by over 15%, without cutting a single workload, because teams finally saw the linkage between architecture decisions and financial impact.

Showback aligns finance and engineering around facts.

Stage 2: Chargeback, Where Incentives Align

Only once visibility stabilizes does accountability become productive.

Shared costs are allocated using rational rules. Product teams incorporate infrastructure cost into margin discussions. Budget guardrails trigger alerts instead of surprise escalations.

This is where cloud shifts from IT overhead to operating discipline.

Stage 3: Unit-Cost KPIs, Where Forecasting Becomes Credible

Forecasting based on last month’s invoice is reactive.

Forecasting based on business demand drivers is strategic.

When cost per transaction, per active user, or per model run becomes measurable, cloud spend can be projected alongside product growth.

If transaction volume increases 20%, infrastructure cost projections follow proportionally, unless architectural inefficiencies distort the curve.

That is when cloud economics becomes an executive conversation.

Governance in the AI Era: Guardrails Over Gatekeepers

Modern governance cannot rely on manual approvals.

Instead, mature organizations embed financial controls into engineering systems.

Tagging standards are enforced at provisioning. Non-compliant resources are flagged automatically. Budget thresholds generate alerts before overruns escalate.

AI workloads especially benefit from automated discipline.

For example, non-production GPU clusters can be scheduled to shut down automatically after experiment windows. Rightsizing recommendations can be routed directly to service owners. Commitment management can be optimized centrally to reduce idle reservation risk.

Governance should reduce friction, not create it.

A practical “no-drama” governance stack looks like this:

1) Tagging standards that teams can actually follow

Minimum set (start here):

- App/Product

- Environment (prod/non-prod)

- Cost center / Business unit

- Owner (team DL or service owner)

- Project/Initiative

Then enforce:

- Tag policies at provisioning time (IaC, templates, pipelines).

- A default quarantine pattern for non-compliant resources (not an outage—just restricted growth).

2) Guardrails over approvals

Replace manual approvals with policy:

- Allowed instance families by environment

- Pre-approved blueprints for common stacks

- Budget thresholds that trigger alerts, not tickets

- Exceptions as code (time-bound, reviewed)

This aligns with the idea of “FinOps as code” embedding cost controls into engineering workflows so optimization is continuous, not episodic

3) Automation that keeps humans out of routine waste

Examples:

- Scheduling non-prod shutdowns

- Rightsizing recommendations routed to owners

- Detecting idle/overprovisioned resources

- Commitment management (reservations/savings plans) with guardrails

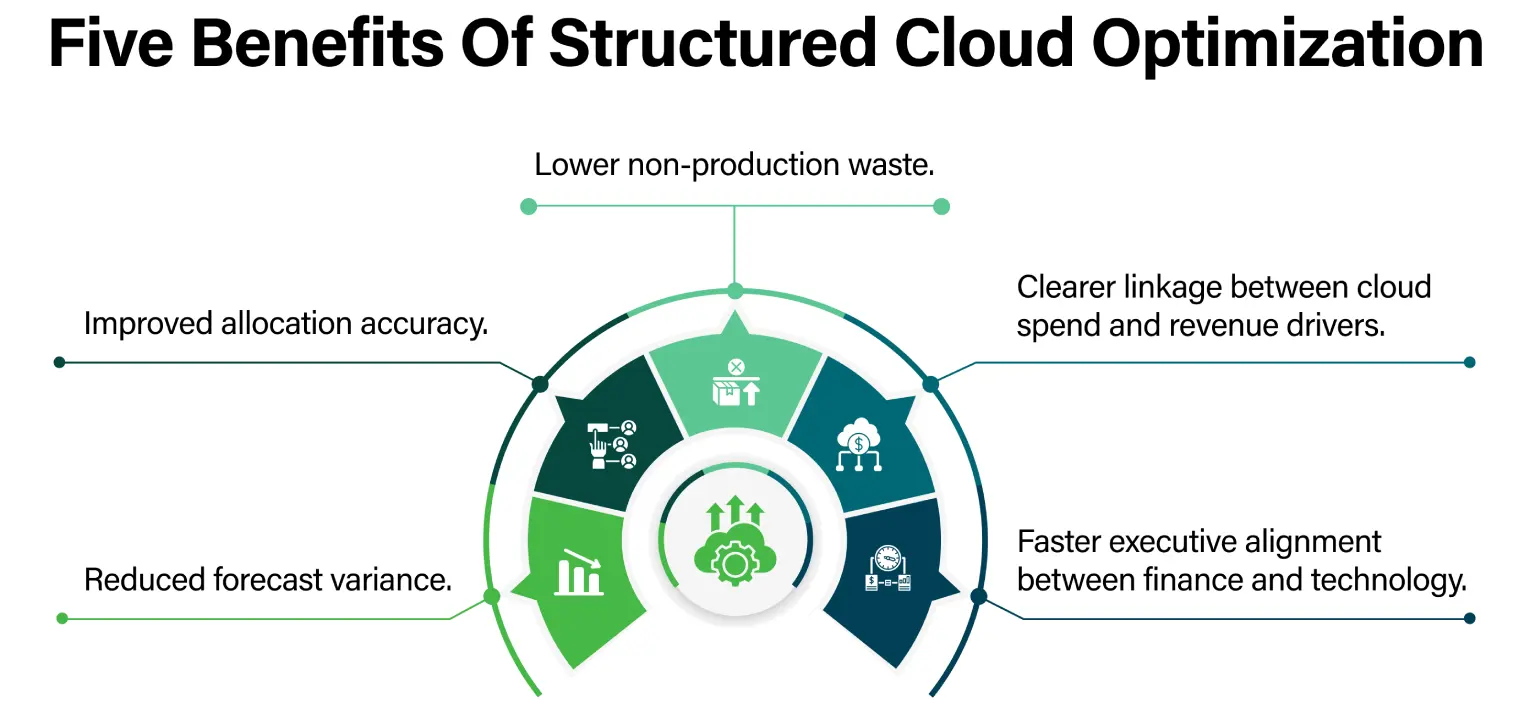

Where CtrlS Cloud Optimize Changes the Equation

Many enterprises understand these principles.

Few sustain them.

The difference lies in operationalizing them consistently across multi-cloud environments, especially when AI workloads and hybrid infrastructure complicate visibility.

CtrlS Cloud Optimize is designed not merely as a cost management dashboard, but as an operating system for cloud predictability.

Managed cloud optimization helps by turning best practices into a repeatable system:

1.Policy & governance implementation

Tagging standards, allocation rules, guardrails, exception workflows

(Aligned with modern cloud governance direction.)

2.Unified visibility across multi-cloud

A single view of spend drivers, anomalies, and allocation health

(Cost transparency/visibility and governance enablement as the core capabilities of cloud cost management solutions.)

3. Automation

“FinOps as code” patterns: embed cost controls into pipelines and provisioning.

4. Forecasting

Trend + seasonality, plus business-driver forecasting once unit-cost KPIs are in place

Cloud Optimize helps transform best practices into institutional rhythm.

The Real Pact

The CFO–CIO pact is not about restricting innovation.

It is about engineering financial confidence into digital expansion.

In 2026, as AI infrastructure intensifies consumption curves and multi-cloud environments deepen complexity, predictability becomes competitive advantage.

Enterprises that treat cloud as an operating model, not a monthly invoice, will scale with clarity.

Those that do not will continue negotiating surprises.

The question is no longer whether to optimize.

It is whether you can forecast with conviction.

Cloud Predictability Assessment

If your cloud forecast still relies on padding instead of demand drivers, a structured assessment can reveal:

- Allocation accuracy gaps

- Sprawl hotspots

- AI workload inefficiencies

- Governance friction points

- Forecast maturity stage

Predictability is achievable, without slowing teams.

It simply requires turning cloud from infrastructure into engineered economics.

Venkat Subrahmanyam, Vice President – Service Delivery, Managed Services, CtrlS Datacenters

With over 18 years of experience driving large-scale technology transformation and business growth, Venkat is a results-oriented leader who has led complex, multi-domain programs across datacenter services, cloud, cybersecurity, and infrastructure operations. He brings deep expertise in end-to-end transition and transformation, ensuring measurable ROI, governance excellence, and delivery efficiency. Venkat has extensive experience managing MeitY portfolios, aligning operations with regulatory and national digital mandates. Known for his strategic influence, he mentors high-performing teams and builds strong C-suite partnerships.