Rack density has become one of the most strategic design choices in modern datacenters. As AI and accelerated computing push infrastructure limits, CIOs increasingly find that density decisions define how far digital ambition can scale.

You have secured the power capacity. You have the GPUs on order. And then the deployment stalls anyway — because nobody planned what happens when 80 kilowatts needs to live in a rack that was designed for 10.

That is the rack density problem. And it lands squarely on the CIO’s desk.

Infrastructure no longer sits behind the business. It shapes how fast models train, how reliably platforms scale, and how resilient operations remain under pressure.

Why rack density is now a CIO-level decision

In AI-driven environments, rack density is no longer a technical parameter managed in isolation. It directly influences how quickly a CIO can deploy AI workloads, how efficiently capital converts into compute, and how reliably digital platforms operate under pressure.

If power is the foundation of AI infrastructure, rack density determines how effectively that power is translated into usable performance.

Done right, rack density planning helps CIOs:

- Reduce total cost of ownership (TCO)

- Accelerate workload deployment timelines

- Improve sustained application performance

- Protect uptime SLAs

- Avoid expensive retrofits

Done poorly, it increases operational risk and hidden costs.

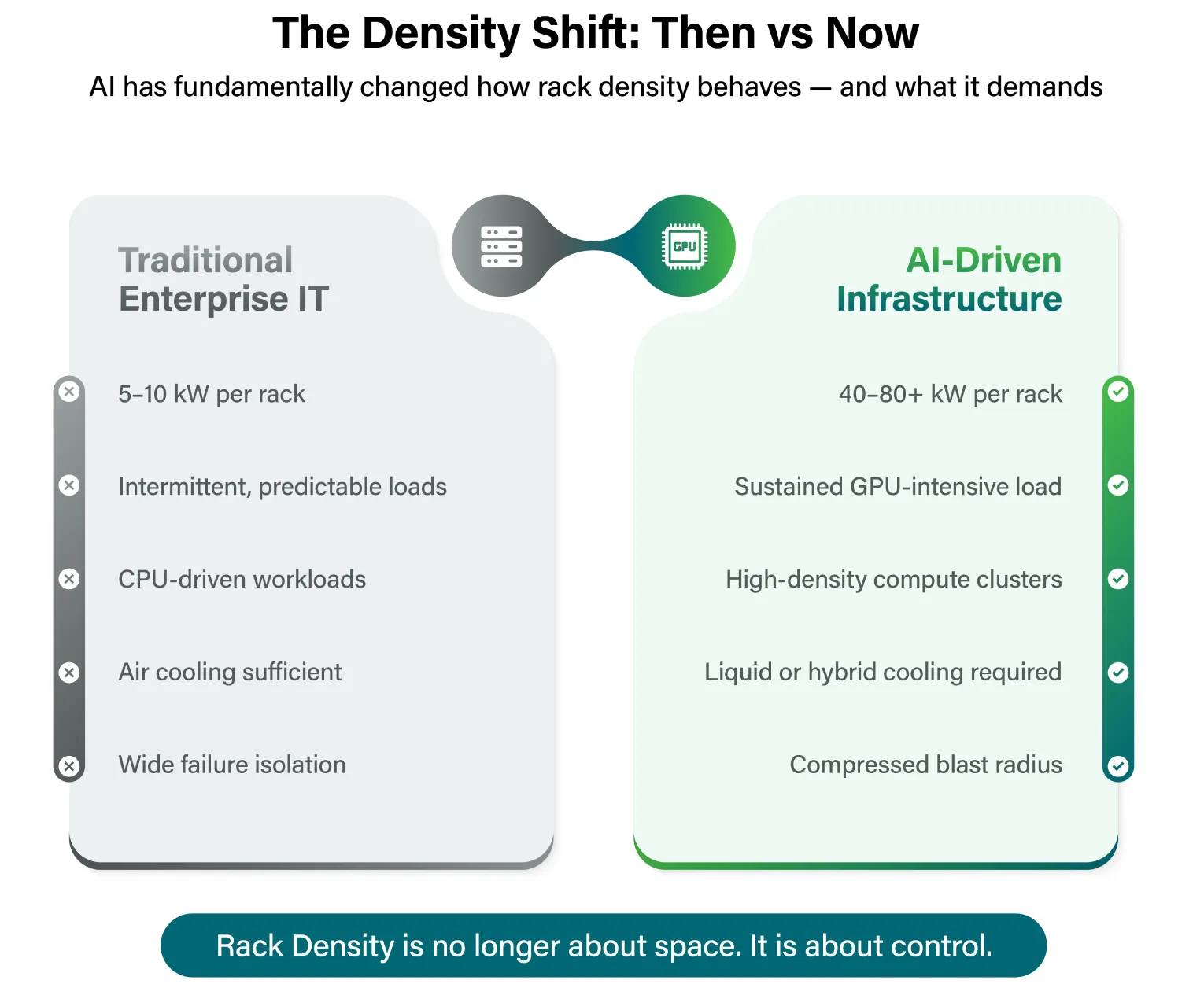

The Density Shift: From 5–10 kW to AI-Scale Compute

Traditional enterprise environments typically operated at 5–10 kW per rack. AI clusters and GPU-heavy deployments now push racks to 40, 60, or even higher kilowatt ranges.

The shift is not incremental. It changes:

- Thermal behavior

- Failure impact radius

- Maintenance complexity

- Cost structure

- Expansion flexibility

For CIOs, this means density is no longer about “fitting more into a rack.” It is about structuring infrastructure to support AI growth without increasing risk.
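To make the scale of that shift concrete, here is a minimal arithmetic sketch. The 1 MW IT load is a hypothetical figure chosen only for illustration, and `racks_needed` is an illustrative helper, not a sizing tool. It shows how the rack count for the same load changes across the density ranges mentioned above.

```python
import math


def racks_needed(total_it_load_kw: float, kw_per_rack: float) -> int:
    """Racks required to host a given IT load at a given per-rack density."""
    if kw_per_rack <= 0:
        raise ValueError("kw_per_rack must be positive")
    return math.ceil(total_it_load_kw / kw_per_rack)


# Hypothetical 1 MW IT load, used only to compare densities.
TOTAL_IT_LOAD_KW = 1000

for density_kw in (5, 10, 40, 60):
    count = racks_needed(TOTAL_IT_LOAD_KW, density_kw)
    print(f"{density_kw:>2} kW/rack -> {count} racks")
```

Fewer racks for the same load means each rack concentrates more heat and more workload, which is why the list above extends well beyond floor space.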

Rack Density as a Business Lever

1. Reducing Total Cost of Ownership

Higher density improves space utilization – but that alone does not guarantee lower cost.

True TCO reduction comes when density planning:

- Aligns rack power with real workload demand

- Avoids over-provisioning electrical infrastructure

- Minimizes stranded capacity

- Prevents premature expansion of data hall footprint

The cost traps are easy to miss. Power and cooling upgrades increase capital expenditure as density rises. Energy costs grow when load increases faster than efficiency improvements. Without automation and standardized processes, operational complexity adds indirect cost. CIOs who model density across a full lifecycle, not just initial deployment, build sustainable cost structures.
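A lifecycle view of cost can be sketched in a few lines. This is a simplified model under stated assumptions, not a financial tool: the `facility_overhead` multiplier (total facility energy divided by IT energy), the flat electricity price, and the helper names are all hypothetical, and real models would add refresh cycles, financing, and tariff changes.

```python
HOURS_PER_YEAR = 8760


def lifecycle_cost(capex, it_load_kw, facility_overhead,
                   price_per_kwh, annual_opex, years):
    """Upfront capex plus yearly energy and operations over `years`.

    facility_overhead is an assumed multiplier that scales IT load
    up to total facility load (cooling, power distribution losses).
    """
    annual_energy = (it_load_kw * facility_overhead
                     * HOURS_PER_YEAR * price_per_kwh)
    return capex + years * (annual_energy + annual_opex)


def stranded_kw(provisioned_kw, peak_draw_kw):
    """Provisioned power that the deployed workload never uses."""
    return max(provisioned_kw - peak_draw_kw, 0.0)


# Hypothetical inputs for a five-year comparison.
total = lifecycle_cost(capex=2_000_000, it_load_kw=500,
                       facility_overhead=1.5, price_per_kwh=0.10,
                       annual_opex=300_000, years=5)
print(f"Five-year cost: {total:,.0f}")
print(f"Stranded capacity: {stranded_kw(800, 500):.0f} kW")
```

Running the same function for two candidate densities, each with its own capex and overhead assumptions, shows whether a denser design actually lowers cost over the full horizon or only at deployment.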

2. Expediting AI Deployment and Business Timelines

One of the most overlooked impacts of rack density is deployment velocity.

When density planning is misaligned with power and cooling realities:

- AI clusters stall despite available floor space

- New GPU deployments require redesign

- Business timelines slip

When density is workload-led and pre-modeled:

- AI pods can be deployed faster

- Expansion happens in predictable phases

- Infrastructure keeps pace with business demand

CIOs who align density with forward workload forecasts — validating power and cooling assumptions at each phase before committing to permanent layout changes — preserve expansion flexibility and keep deployment on schedule. For CIOs measured on digital acceleration, density planning is fundamentally a timeline enabler.

3. Protecting Performance KPIs Under Sustained Load

AI workloads do not behave like traditional enterprise IT. They generate sustained, high-intensity compute loads.

In dense racks:

- Airflow imbalance can cause thermal throttling

- Cooling lag can reduce sustained GPU throughput

- Uneven heat distribution creates performance variability

Even without outages, performance degradation can affect model training cycles and inference reliability.

CIOs must treat rack-level thermal and airflow engineering as performance control mechanisms, not background facilities design.

4. Managing Risk in Compressed Environments

High-density environments compress infrastructure into smaller physical footprints. This increases the “blast radius” of localized issues.

In dense rows:

- A single cooling disruption can impact multiple workloads

- Maintenance windows shrink

- Fault isolation becomes more complex

- Human error carries higher consequences

Density does not inherently increase risk – unmanaged density does.

Resilient design requires:

- Clear zoning strategies

- Coordinated redundancy across layers

- Structured maintenance planning

- Operational discipline

For CIOs accountable for uptime SLAs, density planning is fundamentally a risk management decision.
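Blast radius can be estimated from a simple dependency map. The sketch below assumes a hypothetical inventory in which each rack is served by one cooling unit and hosts a set of workloads. The names (`crah-1`, `rack-a1`, and so on) are invented, and the model deliberately ignores redundancy, so it shows the worst case for a single cooling failure.

```python
from collections import defaultdict

# Hypothetical inventory: cooling unit per rack, workloads per rack.
RACK_COOLING = {
    "rack-a1": "crah-1",
    "rack-a2": "crah-1",
    "rack-b1": "crah-2",
}
RACK_WORKLOADS = {
    "rack-a1": {"training"},
    "rack-a2": {"training", "inference"},
    "rack-b1": {"inference"},
}


def blast_radius(rack_cooling, rack_workloads):
    """Map each cooling unit to the racks and workloads hit if it fails."""
    impact = defaultdict(lambda: {"racks": set(), "workloads": set()})
    for rack, unit in rack_cooling.items():
        impact[unit]["racks"].add(rack)
        impact[unit]["workloads"] |= rack_workloads.get(rack, set())
    return dict(impact)


for unit, hit in sorted(blast_radius(RACK_COOLING, RACK_WORKLOADS).items()):
    print(unit, sorted(hit["racks"]), sorted(hit["workloads"]))
```

A zoning review can use output like this to check whether any single cooling unit takes down every rack that hosts a critical workload.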

How Density Impacts Operational Efficiency

Beyond design, density changes day-to-day execution.

In tightly packed racks:

- Physical access is constrained

- Change management requires greater precision

- Maintenance scheduling becomes more interdependent

Without strong monitoring and standardized processes, operational complexity rises — increasing indirect cost.

Dense environments demand:

- Granular monitoring at rack level

- Real-time visibility into temperature and utilization

- Predictive capacity modeling

- Tighter change control

Operational maturity determines whether density reduces cost or quietly increases it.

Phased Scaling: Avoiding the Overpacking Trap

Many organizations make one of two mistakes:

- Overpacking today to maximize space

- Under-planning for future AI growth

The smarter approach is phased density scaling:

- Validate power and cooling assumptions

- Stress-test sustained AI load behavior

- Expand in structured increments

CIOs who align density with future workload forecasts preserve expansion flexibility and avoid costly redesign cycles.
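The phased approach can be expressed as a sequence of validation gates. This is a minimal sketch under stated assumptions: the `Phase` structure, the capacity figures, and the 10% headroom are hypothetical, and the gate checks only power and cooling capacity. A real gate would also include results from sustained-load stress tests.

```python
from dataclasses import dataclass


@dataclass
class Phase:
    name: str
    racks: int
    kw_per_rack: float


def plan_phases(phases, power_capacity_kw, cooling_capacity_kw, headroom=0.1):
    """Approve phases in order until one fails its validation gate.

    Phases are cumulative: each adds load on top of those already approved.
    The gate requires total load to stay below the tighter of the power
    and cooling limits, minus a safety headroom.
    """
    limit_kw = min(power_capacity_kw, cooling_capacity_kw) * (1 - headroom)
    approved, load_kw = [], 0.0
    for phase in phases:
        proposed_kw = load_kw + phase.racks * phase.kw_per_rack
        if proposed_kw > limit_kw:
            break  # stop here; later phases need new capacity first
        approved.append(phase.name)
        load_kw = proposed_kw
    return approved, load_kw


# Hypothetical three-phase rollout against fixed facility limits.
rollout = [Phase("pilot", 4, 40), Phase("expand", 8, 40), Phase("scale", 8, 60)]
approved, load = plan_phases(rollout, power_capacity_kw=1000,
                             cooling_capacity_kw=800)
print(approved, load)
```

In this example the third phase fails the gate because cooling, not power, is the binding limit, which is the kind of finding that is cheaper to surface in a plan than after racks are installed.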

Planning Density as Part of an AI Infrastructure Strategy

Rack density does not exist in isolation. It is the structural layer between power capacity and thermal design, and it determines how efficiently electrical capacity converts into reliable compute output.

It connects directly to both power architecture and cooling design. CIOs who plan these three dimensions (power, density, and cooling) together, rather than sequentially, avoid the retrofits and redesigns that compress deployment timelines and inflate cost.

The organisations that are scaling AI most effectively are not those with the highest rack densities. They are the ones that matched density to workload profile, planned power and cooling alongside compute, and preserved the flexibility to evolve as AI hardware and business requirements change.

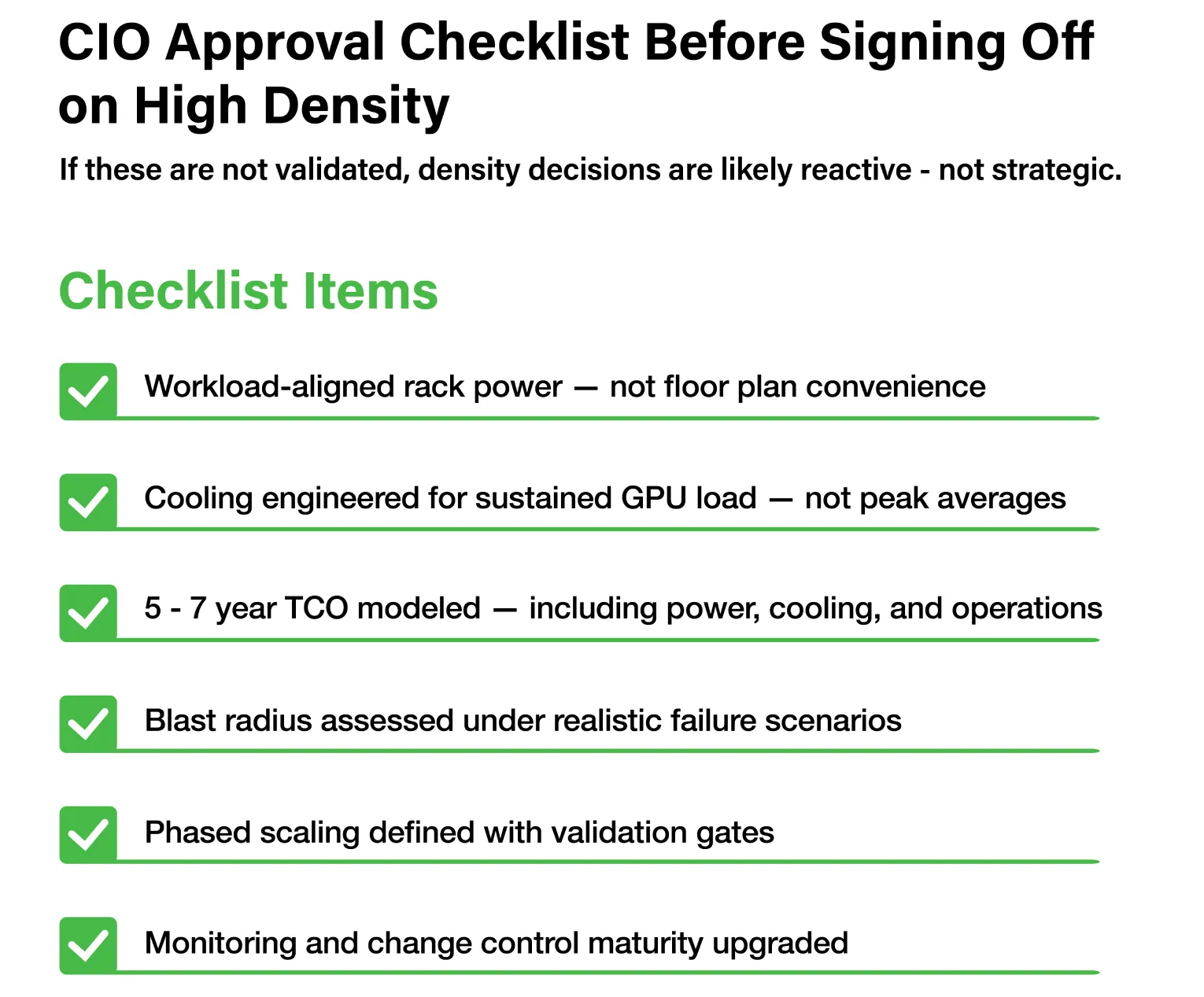

CIO Checklist: Evaluating Rack Density Strategy

Before approving high-density deployments, CIOs should ask:

- Does rack power align with actual AI workload profiles – or with floor plan convenience?

- Are cooling systems engineered for sustained GPU loads, not peak averages?

- Has TCO been modelled over a five-to-seven-year horizon, including power, cooling, and operational overhead?

- Is the blast radius understood and designed for under realistic failure scenarios?

- Will this density design accelerate or constrain future expansion?

- Has phased scaling been built into the deployment plan, with validation gates before each increment?

If these answers are unclear, density decisions are likely reactive rather than strategic, and the cost of that gap will surface in Year 2, not Year 1.

Conclusion

AI ambition is easy to articulate. The infrastructure required to sustain it is harder to build — and rack density is one of the places where that gap becomes visible fastest.

CIOs who engage density planning early are not just making a better infrastructure decision. They are protecting the organisation’s ability to move at the speed AI demands. In an environment where deployment delays, performance degradation, and cost overruns can all trace back to decisions made at the rack level, density planning is not a technical detail to delegate. It is a strategic discipline to own.

Learn more about how CtrlS is building future-ready data center infrastructure at: https://www.ctrls.com

Priya Patil, VP & National Head - Strategic Accounts, CtrlS Datacenters

Priya is the National Head of Strategic Colocation at CtrlS, with deep expertise in the IT & ITeS industry. She drives scalable growth through strong sales strategy, customer relationships, and IT service management. Known for her consultative approach, Priya builds high-value partnerships while blending strategic vision with operational excellence to deliver sustainable impact across regions.