Introduction: Why Power Has Moved to the Center of AI Infrastructure

Artificial intelligence is fundamentally reshaping data-center design and, with it, how power is consumed and managed.

Unlike traditional enterprise workloads, AI training and inference place sustained, high-intensity demands on electrical infrastructure. Rack densities are rising sharply, power draw is less predictable, and even short-duration spikes can strain legacy systems.

As a result, power availability, stability, and scalability now directly influence AI deployment timelines. Increasingly, projects are delayed not by a shortage of compute hardware but by insufficient electrical capacity, utility constraints, or suboptimal power architectures. What was once a facilities concern is now a strategic priority for CIOs, CTOs, and infrastructure leaders.

Data-center power design has moved from the background to the very center of enterprise AI readiness.

From Traditional IT Loads to AI-Driven High-Density Power Demands

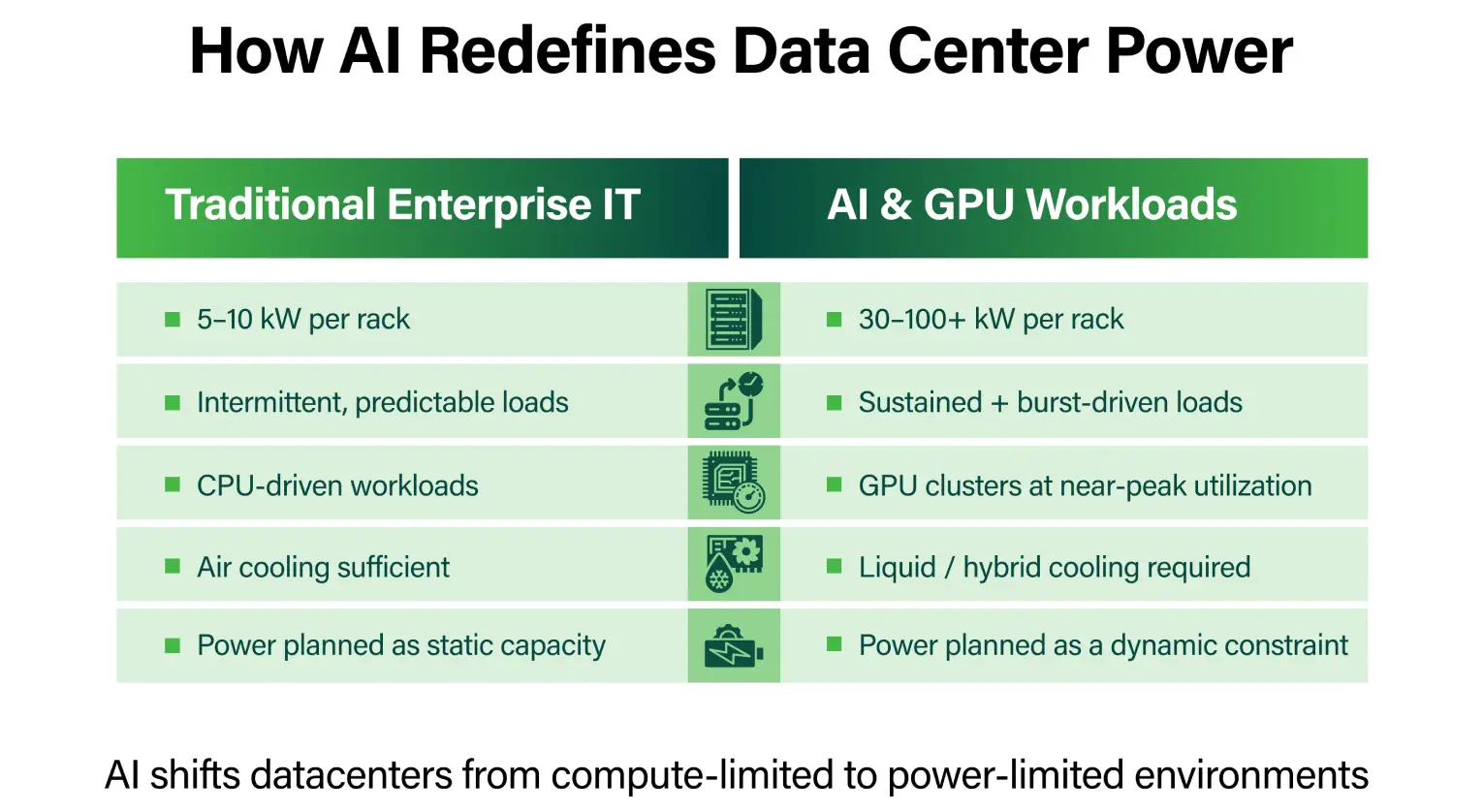

For decades, most data centers were designed around rack densities in the range of 5–10 kW. That assumption no longer holds. Modern AI clusters, driven by GPU-accelerated workloads, routinely push rack densities well beyond those limits. High-performance GPUs operate at high utilization for extended periods, creating sustained power draw rather than intermittent peaks.
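To make the jump from legacy densities concrete, the short sketch below estimates rack power from server count and per-server draw. The figures (four GPU servers at roughly 10 kW each, 2 kW of networking and management gear) are illustrative assumptions rather than vendor specifications, and the function names are hypothetical.

```python
# Hypothetical figures for illustration; substitute datasheet values for real planning.
LEGACY_CEILING_KW = 10.0  # upper end of the legacy 5-10 kW design range cited in the text


def rack_power_kw(servers_per_rack: int, kw_per_server: float, overhead_kw: float = 0.0) -> float:
    """Estimate sustained rack draw: servers times per-server draw, plus other rack gear."""
    return servers_per_rack * kw_per_server + overhead_kw


def multiple_of_legacy(rack_kw: float) -> float:
    """How many times the legacy per-rack ceiling this rack requires."""
    return rack_kw / LEGACY_CEILING_KW


# Assumed: four GPU servers at about 10 kW each, plus 2 kW for switches and management.
gpu_rack_kw = rack_power_kw(servers_per_rack=4, kw_per_server=10.0, overhead_kw=2.0)
print(f"{gpu_rack_kw:.1f} kW per rack, {multiple_of_legacy(gpu_rack_kw):.1f}x the legacy ceiling")
```

Even under these modest assumptions, a single rack needs roughly four times the power that legacy designs allotted.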

AI training and inference both strain power systems, but in different ways, exposing the limits of traditional designs. Their electrical profiles differ enough to materially affect infrastructure design.

AI training workloads typically generate long-duration, steady-state power draw, with GPUs operating near peak utilization for hours or days. This sustained demand stresses upstream capacity planning, utility provisioning, and thermal equilibrium.

AI inference, in contrast, produces highly variable, burst-driven power behavior shaped by real-time user demand, model invocation patterns, and workload orchestration. These rapid fluctuations place disproportionate stress on power distribution equipment, UPS systems, and voltage regulation mechanisms.

Designing for AI therefore requires supporting both continuous high-load operation and rapid load variability, a combination rarely encountered in traditional enterprise IT environments.
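One way to quantify the difference between these two profiles is to compute a few descriptors from a sampled power trace: the mean draw, the peak-to-average ratio, the coefficient of variation, and the steepest ramp between samples. The sketch below applies them to two synthetic traces; the sample values are assumptions chosen to mimic the behavior described above, not measurements.

```python
from statistics import mean, pstdev


def profile_stats(samples_kw: list[float], dt_s: float) -> dict[str, float]:
    """Summarize a power trace sampled every dt_s seconds."""
    avg = mean(samples_kw)
    # Absolute rate of change between consecutive samples, in kW per second.
    ramps = [abs(b - a) / dt_s for a, b in zip(samples_kw, samples_kw[1:])]
    return {
        "mean_kw": avg,
        "peak_to_avg": max(samples_kw) / avg,
        "cv": pstdev(samples_kw) / avg,  # coefficient of variation
        "max_ramp_kw_per_s": max(ramps),
    }


# Synthetic rack traces (assumed, not measured), one sample per second.
training = [38.0, 39.0, 38.5, 39.0, 38.5, 39.0, 38.0, 38.5]
inference = [12.0, 30.0, 15.0, 36.0, 10.0, 28.0, 14.0, 33.0]

for name, trace in (("training", training), ("inference", inference)):
    s = profile_stats(trace, dt_s=1.0)
    print(f"{name:>9}: mean {s['mean_kw']:.1f} kW, peak/avg {s['peak_to_avg']:.2f}, "
          f"CV {s['cv']:.2f}, max ramp {s['max_ramp_kw_per_s']:.1f} kW/s")
```

The training trace shows a high mean with a peak-to-average ratio close to 1, which stresses capacity; the inference trace has a lower mean but large swings, which stress distribution and regulation equipment.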

This shift is making power capacity one of the primary constraints in AI infrastructure planning. According to Gartner, organizations increasingly underestimate the electrical implications of AI initiatives, leading to under-provisioned facilities and delayed deployments.

Modern Data Center Power Infrastructure: Core Components and Architectures

At the foundation of AI-ready data centers are high-capacity utility feeds and on-site substations capable of supporting significantly higher loads.

Within the facility, electrical distribution must scale efficiently. Modern architectures rely on advanced switchgear, overhead or underfloor busways, and intelligent power distribution units (PDUs) that provide granular visibility and control. These components enable operators to distribute power flexibly as rack densities evolve.

Uninterruptible power supply (UPS) systems also face new demands. Supporting higher loads without sacrificing efficiency is essential, particularly as AI clusters scale. Modular UPS designs are increasingly favored, allowing capacity to be added incrementally while maintaining high efficiency across varying load levels.
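The sketch below illustrates the incremental-capacity idea: for each build-out phase it computes how many UPS modules an N+1 configuration needs and how heavily each module is loaded when the load is shared evenly. The 250 kW module rating and the phase loads are hypothetical values chosen for illustration.

```python
import math


def modules_needed(load_kw: float, module_kw: float, redundant: int = 1) -> int:
    """Modules required to carry load_kw, plus `redundant` spare modules (N+1 by default)."""
    return math.ceil(load_kw / module_kw) + redundant


def per_module_loading(load_kw: float, module_kw: float, installed: int) -> float:
    """Fraction of its rating each module carries when the load is shared evenly."""
    return load_kw / (installed * module_kw)


MODULE_KW = 250.0  # hypothetical module rating
for phase_load_kw in (400.0, 900.0, 1600.0):  # assumed build-out phases
    n = modules_needed(phase_load_kw, MODULE_KW)
    share = per_module_loading(phase_load_kw, MODULE_KW, n)
    print(f"{phase_load_kw:6.0f} kW load -> {n} modules, each at {share:.0%} of rating")
```

Adding modules as the load grows keeps each one in a moderate-to-high loading band, whereas a single large UPS sized for the final phase would run lightly loaded, and typically less efficiently, during the early phases.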

Higher-voltage distribution models are gaining traction because they reduce current flow for the same power level, lowering resistive losses and improving efficiency across power distribution paths. This becomes increasingly important as rack-level power climbs into tens of kilowatts.
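The efficiency argument follows from two relationships: for a balanced three-phase load, line current is I = P / (√3 · V_LL · PF), and resistive loss in the conductors is 3 · I² · R. The sketch compares a 208 V and a 415 V feed for the same rack, holding conductor resistance fixed; the 40 kW load, 0.95 power factor, and 0.01 Ω per conductor are assumptions for illustration.

```python
import math


def line_current_a(power_w: float, v_line_to_line: float, power_factor: float = 1.0) -> float:
    """Line current of a balanced three-phase load: I = P / (sqrt(3) * V_LL * PF)."""
    return power_w / (math.sqrt(3) * v_line_to_line * power_factor)


def conductor_loss_w(current_a: float, r_per_conductor_ohm: float) -> float:
    """Resistive loss across the three phase conductors: 3 * I^2 * R."""
    return 3 * current_a ** 2 * r_per_conductor_ohm


RACK_W = 40_000  # assumed rack load in watts
R_OHM = 0.01     # assumed resistance of each feeder conductor
for v in (208, 415):
    i = line_current_a(RACK_W, v, power_factor=0.95)
    print(f"{v} V: {i:.0f} A per phase, {conductor_loss_w(i, R_OHM):.0f} W lost in the feeder")
```

Because loss scales with the square of current, roughly doubling the voltage cuts feeder loss to about a quarter, that is (208/415)², for the same delivered power.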

Direct current (DC) power architectures are also being explored in AI-focused environments, particularly where workloads are highly concentrated. By minimizing repeated AC-to-DC conversions, these models can improve efficiency and reduce thermal overhead. However, DC distribution requires careful consideration of safety, standardization, and operational complexity, and is typically applied selectively rather than universally.
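The benefit of removing conversion stages can be estimated by multiplying the efficiencies of the stages in series. In the sketch below, the stage lists and their efficiencies are hypothetical, and stages common to both paths (such as board-level voltage regulators) are omitted; real values depend heavily on equipment and load level.

```python
from math import prod


def chain_efficiency(stage_efficiencies: list[float]) -> float:
    """End-to-end efficiency of conversion stages in series is the product of their efficiencies."""
    return prod(stage_efficiencies)


# Hypothetical stage efficiencies for illustration, not measured values.
# Stages shared by both paths (e.g. board-level regulators) are left out.
ac_chain = {"UPS double conversion": 0.95, "PDU transformer": 0.98, "server PSU (AC-DC)": 0.94}
dc_chain = {"central rectifier (AC-DC)": 0.97, "server DC-DC stage": 0.96}

IT_LOAD_KW = 1000.0
for name, chain in (("AC", ac_chain), ("DC", dc_chain)):
    eff = chain_efficiency(list(chain.values()))
    input_kw = IT_LOAD_KW / eff
    print(f"{name}: {eff:.1%} end to end, {input_kw - IT_LOAD_KW:.0f} kW lost as heat")
```

Every kilowatt lost in conversion also becomes heat the cooling system must remove, which is why fewer stages can reduce thermal overhead as well as electrical loss.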

Power, Cooling, and Transient Loads: Engineering for AI Workloads

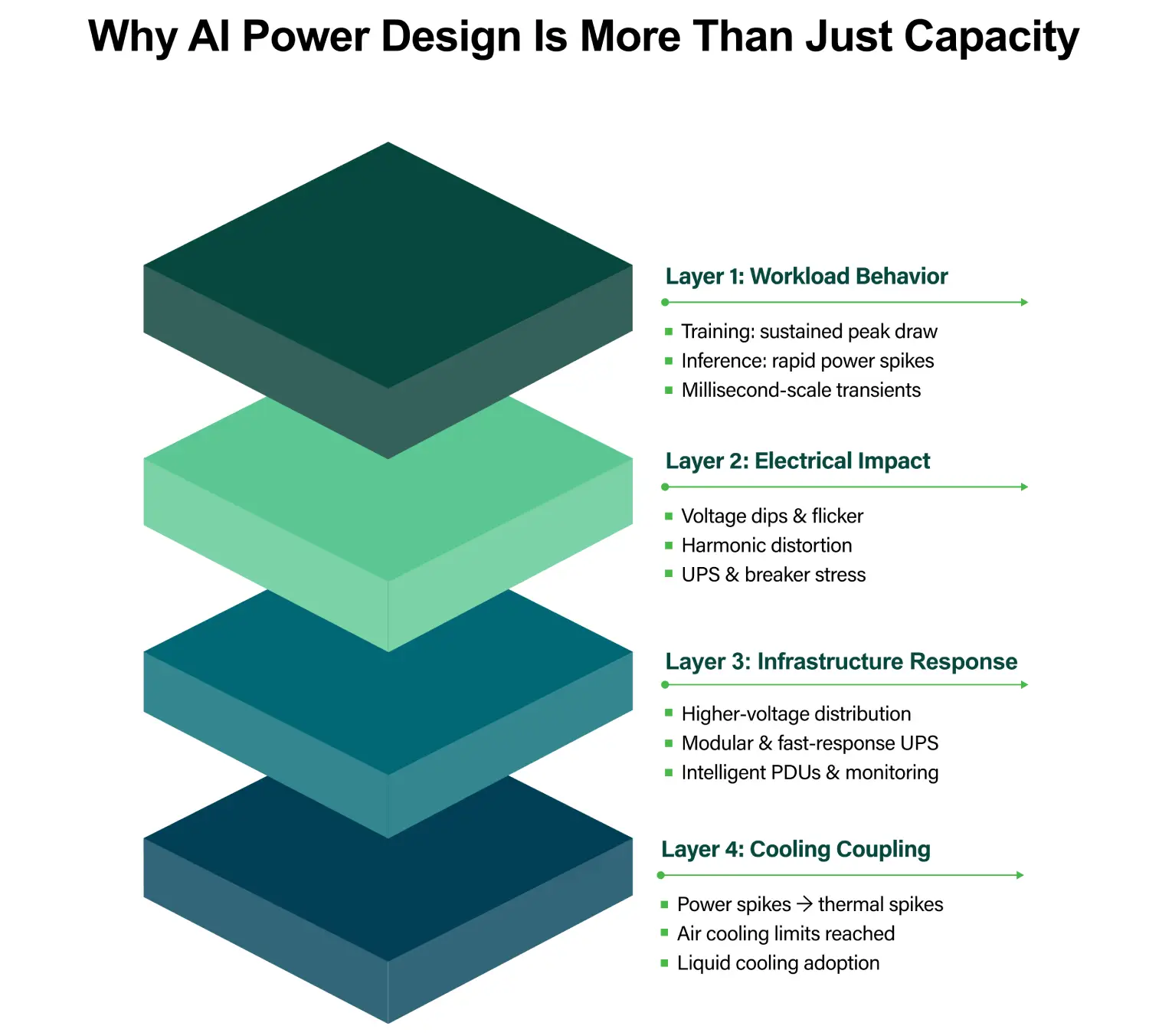

One of the defining challenges of AI infrastructure is the interaction between power and cooling. AI workloads introduce rapid and sometimes unpredictable changes in power demand, creating transient loads that place additional stress on electrical systems.

Transient loads in AI environments occur over very short intervals, often milliseconds to seconds, when GPUs rapidly shift between idle, inference, and peak compute states. Compared with typical CPU-based servers, modern GPUs can step up their power draw by large increments almost instantaneously, creating sharp electrical spikes.

These transients can challenge circuit breakers, busways, and UPS systems that were designed for smoother load curves. If not properly managed, transient spikes may cause nuisance trips, voltage instability, or accelerated equipment wear, even when average power consumption remains within design limits.

As AI densities increase, transient load management becomes as critical as total capacity planning.
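The gap between average and instantaneous demand is easy to miss when only averages are tracked. The sketch below scans a high-resolution power trace for sample-to-sample steps larger than a chosen limit and checks the peak against a short-term threshold. The trace, the 35 kW sustained rating, the 40 kW instantaneous limit, and the 15 kW step limit are all hypothetical values.

```python
from statistics import mean


def find_transients(samples_kw: list[float], dt_ms: float, step_limit_kw: float) -> list[tuple[float, float]]:
    """Return (time_ms, step_kw) for every sample-to-sample jump larger than step_limit_kw."""
    events = []
    for i in range(1, len(samples_kw)):
        step = samples_kw[i] - samples_kw[i - 1]
        if abs(step) > step_limit_kw:
            events.append((i * dt_ms, step))
    return events


# Synthetic rack trace at 10 ms resolution (assumed, not measured).
trace = [20, 21, 20, 45, 46, 22, 20, 21, 44, 20]
SUSTAINED_RATING_KW = 35.0  # hypothetical continuous design limit
INSTANT_LIMIT_KW = 40.0     # hypothetical short-term limit for protection or UPS equipment

print(f"average {mean(trace):.1f} kW within rating: {mean(trace) <= SUSTAINED_RATING_KW}")
print(f"peak {max(trace)} kW above instantaneous limit: {max(trace) > INSTANT_LIMIT_KW}")
for t_ms, step in find_transients(trace, dt_ms=10.0, step_limit_kw=15.0):
    print(f"t={t_ms:.0f} ms: step of {step:+.0f} kW")
```

Here the average sits comfortably inside the rating while the trace still contains several steps of more than 20 kW within 10 ms, exactly the pattern that can cause nuisance trips if equipment is sized only on averages.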

Power and cooling can no longer be designed as independent systems. As rack densities increase, traditional air cooling approaches struggle to remove concentrated heat efficiently. This has accelerated the adoption of liquid and hybrid cooling models, which offer superior thermal performance for high-density environments.

High-density GPU clusters are also more sensitive to power quality issues such as harmonics, voltage dips, and electrical flicker. Switching power supplies used in AI accelerators can introduce harmonic distortion, increasing losses and thermal stress within electrical distribution systems.
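Harmonic distortion is commonly summarized as total harmonic distortion (THD): the RMS sum of all harmonic components above the fundamental, divided by the fundamental. The sketch computes it from a harmonic spectrum; the current values below are hypothetical and stand in for readings from a power-quality meter.

```python
import math


def thd(harmonic_rms: dict[int, float]) -> float:
    """Total harmonic distortion: RMS of harmonic orders 2..n divided by the fundamental (order 1)."""
    fundamental = harmonic_rms[1]
    distortion = math.sqrt(sum(v ** 2 for h, v in harmonic_rms.items() if h >= 2))
    return distortion / fundamental


# Hypothetical current spectrum (amps RMS by harmonic order) for a rack of switch-mode supplies.
current_spectrum = {1: 100.0, 3: 12.0, 5: 8.0, 7: 5.0, 11: 3.0}
print(f"current THD = {thd(current_spectrum):.1%}")
```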

Even brief voltage sags, which often go unnoticed in traditional IT environments, can lead to GPU throttling, performance instability, or node resets in AI clusters. These effects may not trigger full outages but can silently degrade training efficiency and inference reliability.

Maintaining tight power quality tolerances is therefore essential to preserving AI performance at scale.
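Because sags can be too short to register as outages, it helps to log them explicitly. The sketch below groups consecutive RMS voltage readings that fall below a per-unit threshold into events with a start time, duration, and depth. The 0.9 per-unit threshold is an assumption chosen to mirror common sag definitions, the readings are synthetic, and the sketch does not distinguish sags from full interruptions.

```python
def find_sags(rms_v: list[float], nominal_v: float, dt_ms: float,
              threshold_pu: float = 0.9) -> list[tuple[float, float, float]]:
    """Group consecutive RMS readings below threshold_pu * nominal into
    (start_ms, duration_ms, min_pu) events."""
    events = []
    start = None
    min_pu = 1.0
    for i, v in enumerate(rms_v):
        pu = v / nominal_v
        if pu < threshold_pu:
            if start is None:  # a new sag begins
                start, min_pu = i, pu
            else:
                min_pu = min(min_pu, pu)
        elif start is not None:  # voltage recovered, close the event
            events.append((start * dt_ms, (i - start) * dt_ms, min_pu))
            start = None
    if start is not None:  # trace ended during a sag
        events.append((start * dt_ms, (len(rms_v) - start) * dt_ms, min_pu))
    return events


# Synthetic 230 V readings every 10 ms (assumed, not measured).
readings = [230, 229, 231, 190, 185, 200, 228, 230, 205, 230]
for start_ms, dur_ms, depth in find_sags(readings, nominal_v=230.0, dt_ms=10.0):
    print(f"sag at {start_ms:.0f} ms lasting {dur_ms:.0f} ms, down to {depth:.2f} pu")
```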

Redundancy also requires reconsideration. Traditional models such as N+1 or 2N, while effective in conventional data centers, become increasingly costly and inefficient at extreme AI power densities. Replicating full electrical paths for very high-density racks can significantly increase CAPEX and reduce overall utilization.

As a result, some AI environments are adopting workload-aware redundancy strategies, where application-level resilience, distributed training architectures, or software-based fault tolerance partially offset lower physical redundancy. This represents a shift from infrastructure-only resilience to system-level availability design.
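The cost side of the N+1 versus 2N trade-off can be seen by comparing installed capacity with the load it protects. The sketch assumes a hypothetical 4,000 kW critical load served by 1,000 kW blocks (UPS or generator units); the scheme names follow the text, and the numbers are illustrative only.

```python
import math


def installed_capacity_kw(load_kw: float, unit_kw: float, scheme: str) -> float:
    """Installed capacity for a given redundancy scheme built from identical units."""
    n = math.ceil(load_kw / unit_kw)  # units needed to carry the load with no redundancy
    units = {"N": n, "N+1": n + 1, "2N": 2 * n}[scheme]
    return units * unit_kw


LOAD_KW = 4000.0  # assumed critical IT load of an AI hall
UNIT_KW = 1000.0  # assumed UPS or generator block size
for scheme in ("N", "N+1", "2N"):
    cap = installed_capacity_kw(LOAD_KW, UNIT_KW, scheme)
    print(f"{scheme:>4}: {cap:.0f} kW installed, {LOAD_KW / cap:.0%} utilized at full load")
```

Under these assumptions, 2N doubles the installed electrical plant and caps utilization at 50 percent, which is the overhead that workload-aware strategies try to reduce.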

Energy Efficiency, Sustainability, and Intelligent Power Management

Rising AI power consumption has intensified scrutiny of energy efficiency. While Power Usage Effectiveness (PUE) remains a useful metric, it is no longer sufficient on its own: it measures facility overhead relative to IT load but does not capture how efficiently that power is converted into AI outcomes. AI-era data centers increasingly require rack-level and workload-aligned efficiency metrics, such as energy per training job, energy per inference request, or utilization-adjusted efficiency indicators.

These granular measurements enable operators to align infrastructure efficiency with actual AI productivity, rather than relying solely on facility-level averages that can obscure localized inefficiencies.
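The sketch below shows why a facility-level average can hide localized inefficiency. PUE is computed as total facility energy divided by IT energy, and a workload metric (energy per inference request) is derived by attributing facility overhead in proportion to PUE, which is a simplifying assumption. The two clusters, their energy use, and their request counts are hypothetical.

```python
def pue(total_facility_kwh: float, it_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy divided by IT equipment energy."""
    return total_facility_kwh / it_kwh


def energy_per_unit_kwh(it_kwh: float, units_of_work: int, pue_value: float = 1.0) -> float:
    """Facility energy attributed to each unit of AI output (job, request, etc.).
    Assumes overhead is shared in proportion to IT energy, i.e. scaled by PUE."""
    return it_kwh * pue_value / units_of_work


# Hypothetical month for two clusters in the same facility (and therefore the same PUE).
FACILITY_PUE = pue(total_facility_kwh=1_300_000, it_kwh=1_000_000)
clusters = {
    "cluster A (well utilized)": {"it_kwh": 50_000, "requests": 400_000_000},
    "cluster B (mostly idle)": {"it_kwh": 50_000, "requests": 100_000_000},
}
for name, c in clusters.items():
    wh_per_request = energy_per_unit_kwh(c["it_kwh"], c["requests"], FACILITY_PUE) * 1000
    print(f"{name}: {wh_per_request:.3f} Wh per request at PUE {FACILITY_PUE:.2f}")
```

Both clusters report the same PUE, yet cluster B spends four times as much energy per request, a difference visible only in the workload-aligned metric.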

Granular power monitoring improves visibility and control, while dynamic power management aligns energy use with workload demand. Renewable energy integration and sustainable cooling strategies are becoming essential to support long-term growth.
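Dynamic power management can take many forms. As one minimal sketch, the function below allocates a shared power budget across racks: every rack receives its full demand when the budget allows, and otherwise all grants are scaled down proportionally so the total matches the budget. This proportional policy, the rack names, and the demand values are hypothetical; real systems would enforce the grants through server or accelerator power-capping mechanisms and may prioritize workloads differently.

```python
def allocate_power(demands_kw: dict[str, float], budget_kw: float) -> dict[str, float]:
    """Grant every rack its demand if the budget allows; otherwise scale all
    grants proportionally so the total equals the budget (a simple, hypothetical policy)."""
    total = sum(demands_kw.values())
    if total <= budget_kw:
        return dict(demands_kw)
    scale = budget_kw / total
    return {rack: demand * scale for rack, demand in demands_kw.items()}


demands = {"rack-01": 40.0, "rack-02": 35.0, "rack-03": 25.0}  # hypothetical demands in kW
for budget in (120.0, 90.0):
    grants = allocate_power(demands, budget_kw=budget)
    print(budget, {rack: round(kw, 1) for rack, kw in grants.items()})
```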

Future-Proofing AI Data Centers: Design Strategies and Industry Challenges

Rack densities are expected to continue increasing with future AI hardware. Electrical and mechanical infrastructure must therefore be designed for upgradeability rather than around fixed density assumptions.

Utility power constraints and long approval cycles increasingly affect expansion plans, making early power strategy decisions critical. CAPEX investments must be balanced against long-term operational efficiency, particularly as AI infrastructure lifecycles shorten.

Standards-based designs, modular construction, and ecosystem partnerships help reduce complexity and deployment risk in an increasingly power-constrained environment.

Conclusion: Power as the Foundation of AI-Ready Data Centers

Future-ready power infrastructure enables scalable, resilient, and sustainable AI growth. Data center design is clearly shifting from a compute-centric to a power-centric mindset. As AI adoption accelerates, power engineering is becoming a key differentiator for organizations building truly AI-ready data centers.

Learn more about how CtrlS is building future-ready data center infrastructure.

Priya Patil, VP & National Head - Strategic Accounts, CtrlS Datacenters

Priya is the National Head of Strategic Colocation at CtrlS, with deep expertise in the IT & ITeS industry. She drives scalable growth through strong sales strategy, customer relationships, and IT service management. Known for her consultative approach, Priya builds high-value partnerships while blending strategic vision with operational excellence to deliver sustainable impact across regions.