A financial services CTO approves an AI pilot.

The architecture appears manageable. Six autonomous agents are deployed to monitor transactions, flag compliance anomalies, assist customer support teams, and continuously update risk scoring models. Early results are promising. Customer queries are resolved faster, fraud detection improves, and several departments begin requesting similar agents for their own workflows.

Three months later, the finance team flags a concern.

The company’s cloud bill has nearly tripled.

There has been no dramatic increase in traffic and the number of deployed models remains roughly the same. Yet infrastructure costs continue to climb.

Engineers eventually identify the reason. The organization has quietly shifted from traditional AI inference pipelines to continuously running autonomous agents.

This is the fundamental infrastructure shift introduced by Agentic AI.

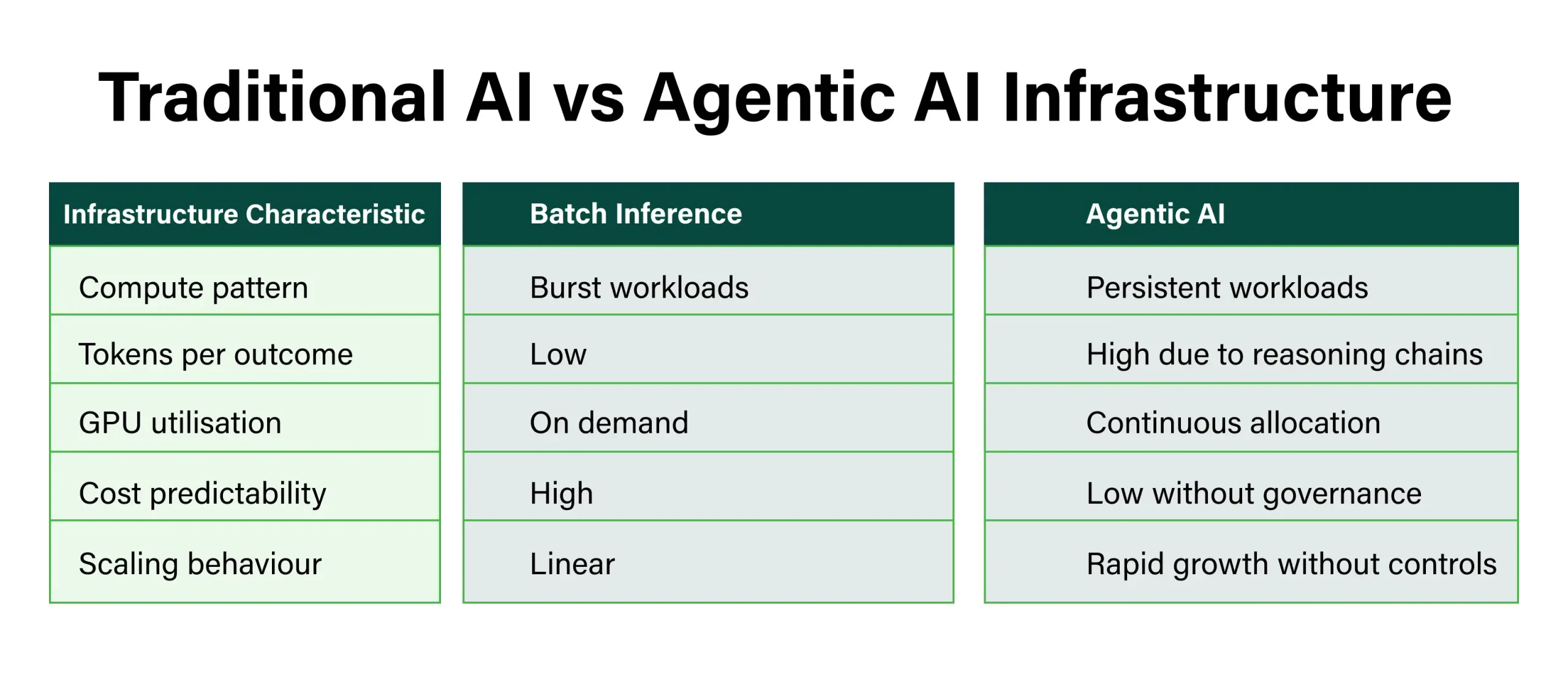

Most enterprises initially budget their AI strategy around batch inference, where prompts arrive in bursts and compute is released after each response. Autonomous agents operate differently. They run continuously, monitor events, trigger tools, and execute multi step reasoning chains until tasks are completed.

That shift fundamentally changes how infrastructure is consumed and how cloud costs scale at enterprise level.

1. What Makes Agentic AI Fundamentally Different

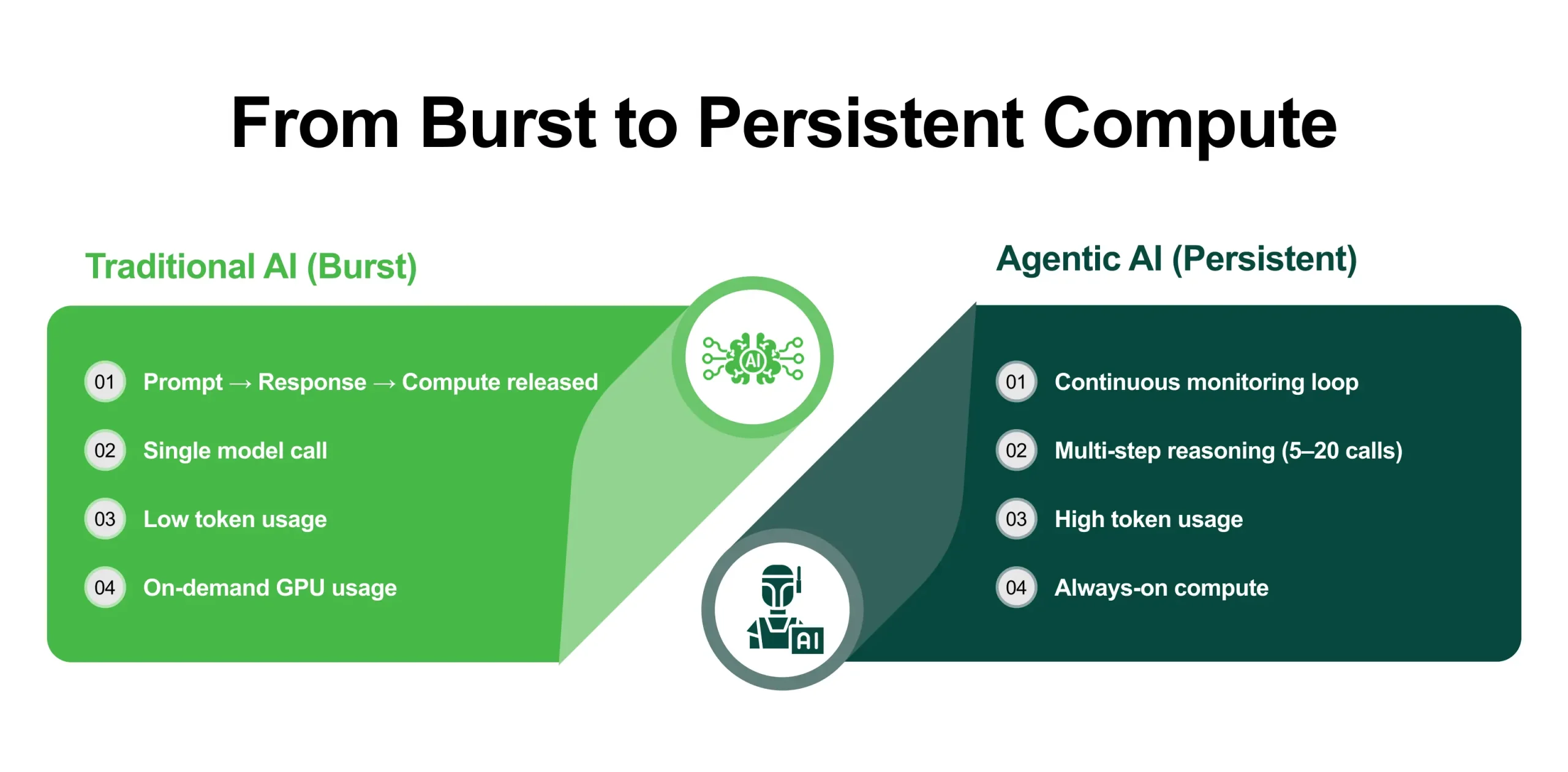

The defining change introduced by agentic systems is the shift from request response computing to persistent compute environments.

Traditional AI systems behave like transactional services. A user sends a prompt, the model generates a response, and compute resources are released. Each interaction typically consumes only a few seconds of GPU time.

Agentic systems operate very differently. Instead of responding to individual prompts, autonomous agents run continuously. They monitor data streams, evaluate signals, trigger actions, and determine whether further reasoning is required.

This means infrastructure remains allocated rather than being released after each task.

- Multi Step Reasoning and Tool Use

Another major difference lies in how agents process decisions.

Traditional inference usually involves a single model call. Agentic workflows often require multiple steps. An agent may retrieve information, validate it, generate intermediate reasoning, and refine its response before completing an action.

In enterprise deployments, a single outcome can involve 5 to 20 model calls, significantly increasing token consumption and compute demand.

Agents also interact with enterprise tools such as databases, APIs, analytics platforms, and search systems. These interactions require orchestration layers that coordinate communication between models and enterprise infrastructure.

These layers typically include:

- Vector databases for semantic search

- Workflow engines for managing agent tasks

- Tool registries that expose APIs

- Monitoring systems for agent activity

Each component increases infrastructure complexity and operational overhead.

- Persistent Context and Parallel Execution

Agentic systems also maintain context across sessions. Instead of processing isolated prompts, models repeatedly evaluate accumulated reasoning steps and historical context.

As deployments grow, this persistent memory layer increases both token consumption and infrastructure demand.

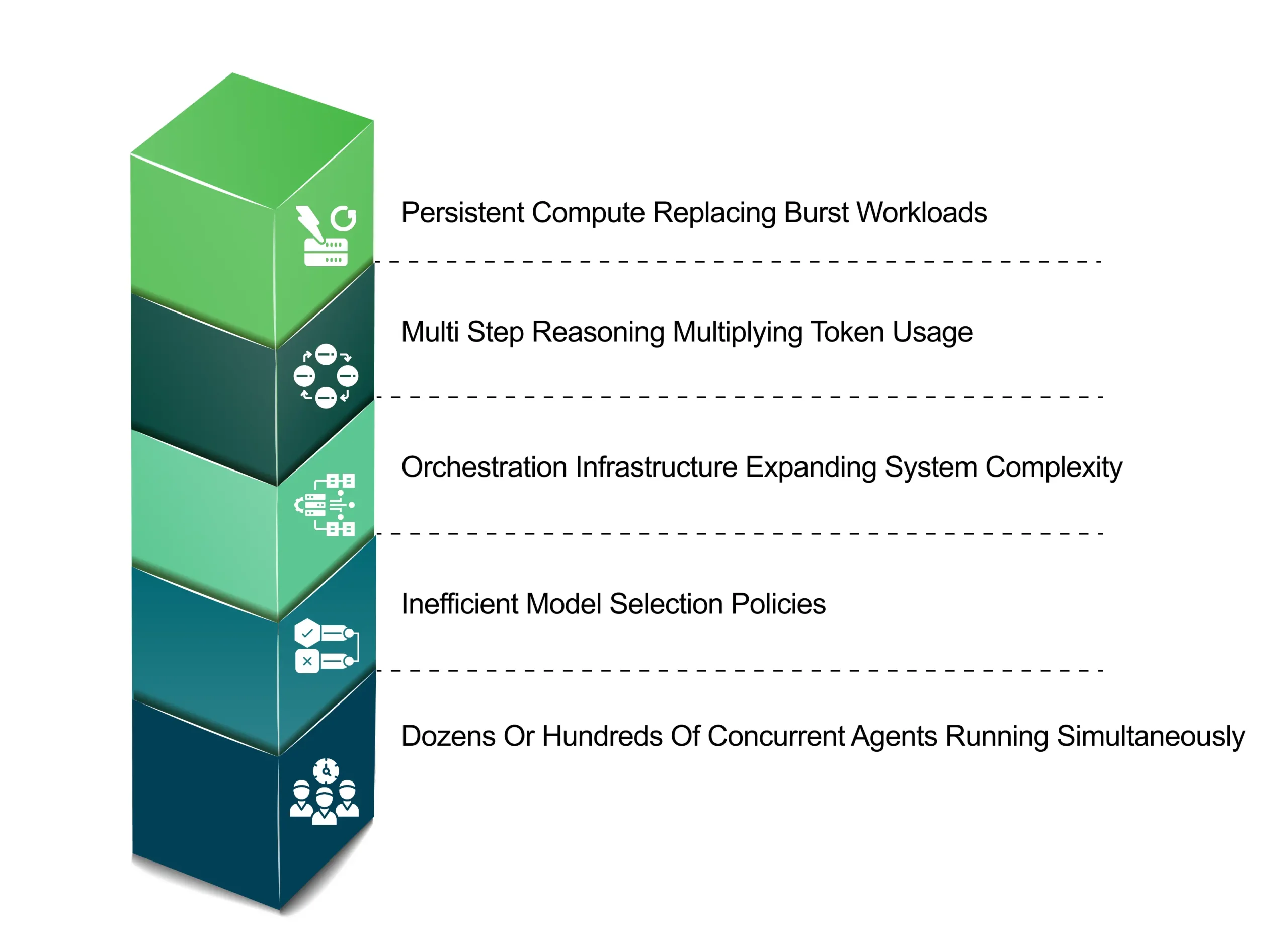

At enterprise scale, the challenge compounds further because organizations rarely deploy a single agent. Most environments run dozens or even hundreds simultaneously across departments.

The implication is clear.

Agentic AI does not consume compute like a tap that turns on and off. It behaves more like a pump that runs continuously.

2. The Cloud Bill Nobody Modelled For

Many enterprises discover the financial implications of agentic systems only after deployments move into production.

During pilot phases, costs appear manageable. But once autonomous workflows scale, infrastructure consumption increases far faster than expected.

Several factors drive this cost expansion.

- Token Explosion

Multi step reasoning chains dramatically increase token usage.

While a traditional prompt interaction may consume a few thousand tokens, agentic systems can trigger hundreds or thousands of model calls during complex workflows.

Research suggests that multi-agent reasoning pipelines can consume 10 to 50 times more tokens per outcome compared to single turn inference tasks, significantly increasing infrastructure costs at scale.

- Idle but Running Compute

Public cloud GPUs are typically billed hourly.

If an agent reserves compute resources but spends time waiting for external APIs or internal data queries, billing continues even when the GPU is not actively performing inference.

Persistent autonomous workloads therefore generate large amounts of reserved infrastructure usage.

- Orchestration Infrastructure

Agent frameworks such as LangChain, LangGraph, and AutoGen introduce additional infrastructure layers.

These commonly include:

- Memory stores for maintaining context

- Vector search engines for retrieval systems

- Event streaming pipelines for agent triggers

- Monitoring platforms for operational visibility

Each component adds cost and operational complexity.

- Model Routing Without Governance

Many enterprises initially route every task to the most capable model available.

However, only a small percentage of tasks require frontier models. Simpler operations such as classification, summarization, or structured extraction can often be handled by smaller models at significantly lower cost.

Without intelligent routing policies, organizations end up paying premium inference costs unnecessarily.

- Why Finance Teams Are Getting Surprised

Several factors combine to create the cost shock many enterprises experience:

When these factors combine, infrastructure consumption can grow rapidly.

Organizations that initially budgeted ₹10 to ₹15 lakh per month for inference often report spending ₹40 to ₹80 lakh once agentic workflows scale across departments.

The compute demand existed in the architecture all along. It simply remained invisible during the pilot stage.

- Traditional AI vs Agentic AI Infrastructure

3. What This Means for Infrastructure Architecture

The rise of autonomous agents forces enterprises to rethink several infrastructure decisions.

Three in particular determine whether agentic AI deployments remain economically sustainable.

- Decision 1: Where Agents Run

Public cloud GPUs work well for experimentation and burst workloads. However, agentic systems run continuously. For persistent workloads, dedicated GPU infrastructure can offer more predictable costs compared to variable usage based cloud billing.

- Decision 2: How Models Are Routed

Not every task requires frontier models.

A multi-model routing layer ensures that complex reasoning tasks are handled by advanced models while simpler tasks are routed to smaller and cheaper alternatives automatically.

Industry analyses show that intelligent model routing and optimization strategies can reduce enterprise AI inference costs by roughly 30–50%, depending on workload complexity and model selection policies.

- Decision 3: How Agent Activity Is Governed

Autonomous systems require detailed operational visibility.

Infrastructure governance requires monitoring capabilities such as:

- Token consumption tracking

- Model usage analytics

- Tool interaction logs

- Agent decision histories

These capabilities transform autonomous AI from a black box into a manageable operational platform.

4. The Neutrino Approach Built for Agentic Economics

Many organizations discover the infrastructure challenges of agentic deployments only after the systems are already running.

The CtrlS Neutrino framework is designed to address these issues earlier in the lifecycle.

- Intelligent Multi Model Routing

Neutrino dynamically routes tasks across models such as GPT, Claude, DeepSeek, Qwen, and Llama based on task complexity. Advanced models handle complex reasoning while simpler tasks are automatically directed to smaller, lower cost models. This happens without requiring code changes, helping reduce inference costs across large agent deployments.

- Full Observability

Neutrino’s Business Insights module provides end to end visibility into agent activity. Every model call, tool interaction, and decision is logged and traceable, enabling organizations to monitor token usage, track model performance, and detect cost anomalies.

- Flexible Deployment Architecture

Neutrino is infrastructure agnostic and can run across public clouds or dedicated GPU private cloud environments. This allows organizations to shift persistent workloads to environments that offer predictable costs, lower latency, and stronger data sovereignty.

5. A Practical Starting Point

Enterprises planning their next agentic deployment can avoid many cost surprises by taking a few proactive steps.

- Map the full agent architecture

Document every model call, tool interaction, and data exchange in the workflow.

- Define routing policies before scaling

Determine which tasks require frontier models and which can use smaller models.

- Evaluate infrastructure economics early

Persistent workloads may benefit from dedicated GPU infrastructure rather than variable hyperscaler pricing.

These steps help organizations scale agent networks without unexpected cost escalation.

6. Concluding Note

Agentic AI marks a shift from prompt based systems to continuously running autonomous agents that monitor events, reason across multiple steps, and interact with enterprise tools in real time.

This turns compute into a persistent workload, making infrastructure planning essential for cost control and scalability.

Platforms like CtrlS Neutrino, supported by CtrlS GPU Private Cloud, help enterprises run agentic workloads with the performance, governance, and cost predictability needed at scale.

Srini Reddy, EVP & Head - Service Delivery, CtrlS Datacenters

With over 25 years of experience in the IT industry, Srini is a seasoned leader in cloud and IT infrastructure solutions. At CtrlS, he is responsible for the overall operations, and customer service delivery. Srini holds a strong track record of leading and managing cross-geography teams and partners, delivering key business and technology transformations. His extensive expertise spans program and project management, as well as IT service management, IT strategy, and quality management.